To meet business requirements, we often have to customize a Salesforce application heavily. Most of that customization is Apex code around record DML operations: running business logic when a record is created or updated, or running validation logic when a record is deleted. This customization adds complexity, and if it is not coded well, it will hurt the application's performance. This post will help you optimize the Apex code you add to handle business requirements.

Below are the code optimizations we can apply when querying data or performing DML operations in Apex code.

- DML or SOQL Inside Loops

- Limiting data rows for lists

- Disabling Debug Mode for production

- Stop Describing every time and use Caching of the described object

- Avoid .size() and use .isEmpty()

- Use Filter in SOQL

- Limit depth relationship code

- Avoid Heap Size

- Optimize Trigger

- Bulkify Code

- Foreign Key Relationship in SOQL

- Defer Sharing Rules

- Use collections like Map

- Querying Large Data Sets

- Use Asynchronous methods

- Avoid Hardcode in code

- Use Platform Caching

- Use property initializer for test class

- Unwanted Code Execution

In the code practice series, we will discuss each of the above approaches. In this post, let’s examine the first good code practice (DML or SOQL Inside Loop).

DML or SOQL Inside Loops:

SOQL (Salesforce Object Query Language) retrieves data from Salesforce objects, and DML (Data Manipulation Language) inserts, updates, or deletes records in Salesforce objects.

What problem can occur if we use DML or SOQL in loops?

Salesforce enforces governor limits of 100 SOQL queries (in synchronous Apex) and 150 DML statements in one transaction. If our code issues more than 100 queries or more than 150 DML statements, we get the error System.LimitException: Too many SOQL queries: 101 or System.LimitException: Too many DML statements: 151. So we should write code that never reaches those thresholds.
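To see how close a transaction is to these limits, the standard `Limits` class reports both the current usage and the ceiling. A minimal sketch that can be run as anonymous Apex or dropped into a trigger while debugging:

```apex
// Log how much of the per-transaction SOQL and DML budget has been used so far.
System.debug('SOQL queries used: ' + Limits.getQueries()
    + ' of ' + Limits.getLimitQueries());
System.debug('DML statements used: ' + Limits.getDmlStatements()
    + ' of ' + Limits.getLimitDmlStatements());
```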

So let us see examples of where we can face limit exceptions and what ways we can avoid DML/SOQL inside the loop.

Use Case:

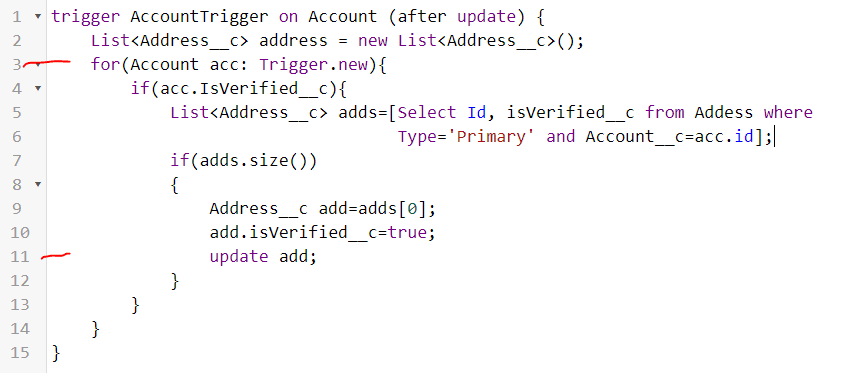

Take an example. We have an Account object that holds insurance customer information. When an account record is updated, we update its related address records. This is a very simple requirement, and a small trigger can handle it. Yes, we could do this with a flow, but to understand the problem, we will write code.
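As a rough text sketch of such a trigger, assume a hypothetical custom object `Address__c` with a lookup field `Account__c` and a text field `City__c` (these names, and the trigger name, are illustrative and not taken from the original code). Written one record at a time, it could look like this:

```apex
// Hypothetical names: Address__c, Account__c (lookup to Account), City__c.
trigger AccountAddressSync on Account (after update) {
    for (Account acc : Trigger.new) {
        // Anti-pattern: one SOQL query for every account being updated.
        List<Address__c> addresses = [
            SELECT Id, City__c
            FROM Address__c
            WHERE Account__c = :acc.Id
        ];

        for (Address__c addr : addresses) {
            addr.City__c = acc.BillingCity;
        }

        // Anti-pattern: one DML statement for every account being updated.
        update addresses;
    }
}
```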

If we look at the above code, it works perfectly when the action comes from the UI, such as a Lightning page, because only a single record is processed. But assume that later the Account object is updated from a data import tool or by a batch job that updates thousands of records at once. Will the above code still work?

No, it will fail with the System.LimitException mentioned above. Why? If you look at the code carefully, you will see that the SOQL query runs inside a loop, and we can issue only 100 SOQL queries in one transaction. So, how do we handle this SOQL limit exception?

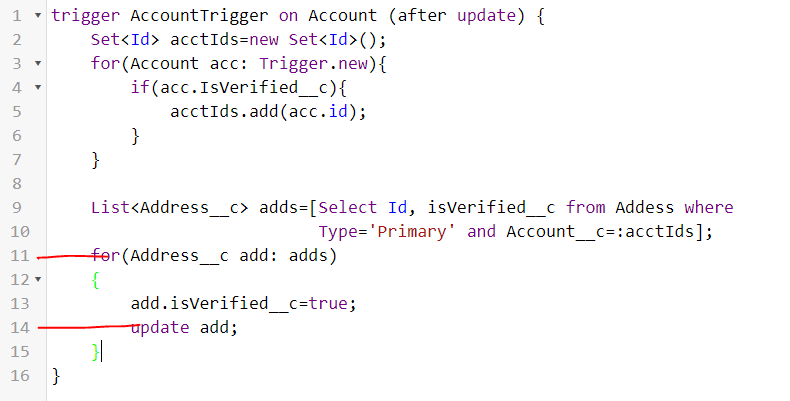

We can resolve the SOQL limit exception by collecting all account IDs in the loop and using those IDs in a single SOQL query (like lines # 3-10). Now the above code will not throw the SOQL exception, because the query no longer runs inside the for loop. But when this trigger runs from a data update tool, it will still throw the Too many DML statements exception when more than 150 records are updated. Let us handle that exception as well.
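In text form, this intermediate step might look like the sketch below. It reuses the hypothetical `Address__c`, `Account__c`, and `City__c` names, which are assumptions for illustration. The query has moved out of the loop, but the `update` has not:

```apex
// Hypothetical names: Address__c, Account__c (lookup to Account), City__c.
trigger AccountAddressSync on Account (after update) {
    // Collect every account Id in the trigger batch first.
    Set<Id> accountIds = new Set<Id>();
    for (Account acc : Trigger.new) {
        accountIds.add(acc.Id);
    }

    // One query for the whole batch, grouped by parent account.
    Map<Id, List<Address__c>> addressesByAccount = new Map<Id, List<Address__c>>();
    for (Address__c addr : [
        SELECT Id, Account__c, City__c
        FROM Address__c
        WHERE Account__c IN :accountIds
    ]) {
        if (!addressesByAccount.containsKey(addr.Account__c)) {
            addressesByAccount.put(addr.Account__c, new List<Address__c>());
        }
        addressesByAccount.get(addr.Account__c).add(addr);
    }

    for (Account acc : Trigger.new) {
        List<Address__c> addresses = addressesByAccount.get(acc.Id);
        if (addresses == null) {
            continue; // this account has no related addresses
        }
        for (Address__c addr : addresses) {
            addr.City__c = acc.BillingCity;
        }
        // Still an anti-pattern: one DML statement per account.
        update addresses;
    }
}
```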

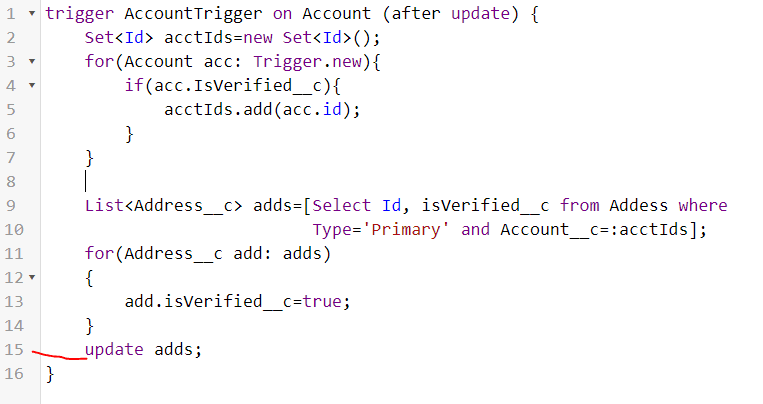

Instead of updating records inside the loop, we can set the field values on records held in a collection and update the whole collection at once (code lines # 11-15). Now our code is sound with respect to DML and SOQL inside loops: neither the SOQL query nor the DML statement runs in a loop. There is still one issue in the code related to unwanted code execution, which we will cover in later posts.
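A sketch of the fully bulkified version, again using the hypothetical `Address__c`, `Account__c`, and `City__c` names, issues one query and one DML statement regardless of how many accounts are in the batch:

```apex
// Hypothetical names: Address__c, Account__c (lookup to Account), City__c.
trigger AccountAddressSync on Account (after update) {
    // Collect every account Id in the trigger batch.
    Set<Id> accountIds = new Set<Id>();
    for (Account acc : Trigger.new) {
        accountIds.add(acc.Id);
    }

    // Change the related records in memory; no DML inside the loop.
    List<Address__c> addressesToUpdate = new List<Address__c>();
    for (Address__c addr : [
        SELECT Id, Account__c, City__c
        FROM Address__c
        WHERE Account__c IN :accountIds
    ]) {
        Account parent = (Account) Trigger.newMap.get(addr.Account__c);
        addr.City__c = parent.BillingCity;
        addressesToUpdate.add(addr);
    }

    // A single DML statement for the whole batch.
    if (!addressesToUpdate.isEmpty()) {
        update addressesToUpdate;
    }
}
```

This sketch still updates every related address even when the account's city has not changed, which is the unwanted code execution issue mentioned above.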

Summary:

Apex code executes in an atomic transaction. When we code, we should stay below 100 SOQL queries and 150 DML statements in a single transaction. We should never design for single-record processing; always write code that works when records are processed in bulk. We should also consider that a particular code block can be triggered by inserts or updates on other records. For example, an account trigger can also fire because of a contact record update, so the number of SOQL queries can grow when several dependent objects are involved. We will see these fixes in later posts.

This optimization technique also applies to flow execution. If we have created a record-triggered flow, that flow executes for each record when records are processed in bulk, so a similar approach is required in flows.
